Automated Scene Population and Setup

Populating a large environment, such as a city or a village, often requires significant manual labor. CIVIL-AI-SYSTEM addresses this with a population system that automates the placement of characters within a scene. Users define zones (for example houses, workplaces, and item locations) and use population regions to draw characters from a pool. The system then distributes these characters across the designated areas and assigns them to those zones.
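The article does not document CIVIL-AI-SYSTEM's internal data model or API, so the following is only a minimal Python sketch of the general idea: characters are drawn from a pool and each one receives a home and a workplace that still has free capacity. The names `Zone`, `least_loaded`, and `populate`, the two zone kinds, and the least-loaded placement rule are all assumptions made for illustration, not the tool's actual method.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical zone model; CIVIL-AI-SYSTEM's real zone types are not shown here.
@dataclass
class Zone:
    name: str
    kind: str        # "house" or "workplace" in this sketch
    capacity: int
    occupants: list = field(default_factory=list)

    def has_room(self) -> bool:
        return len(self.occupants) < self.capacity


def least_loaded(zones: list) -> Optional[Zone]:
    """Return the open zone with the lowest fill ratio (the first one wins ties)."""
    open_zones = [z for z in zones if z.has_room()]
    if not open_zones:
        return None
    return min(open_zones, key=lambda z: len(z.occupants) / z.capacity)


def populate(pool: list, zones: list):
    """Give each character a home and a workplace; report anyone who cannot be placed."""
    houses = [z for z in zones if z.kind == "house"]
    workplaces = [z for z in zones if z.kind == "workplace"]
    assignments, unplaced = {}, []
    for character in pool:
        home, work = least_loaded(houses), least_loaded(workplaces)
        if home is None or work is None:
            unplaced.append(character)   # no free slot left in one of the zone kinds
            continue
        home.occupants.append(character)
        work.occupants.append(character)
        assignments[character] = (home.name, work.name)
    return assignments, unplaced


zones = [
    Zone("House A", "house", 2),
    Zone("House B", "house", 1),
    Zone("Bakery", "workplace", 2),
    Zone("Smithy", "workplace", 2),
]
assignments, unplaced = populate(["Ada", "Ben", "Cy", "Dee"], zones)
print(assignments)
print(unplaced)  # ['Dee']
```

Picking the least-filled zone spreads characters evenly instead of packing the first zone full; a character who cannot get both a home and a job is reported rather than silently dropped.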

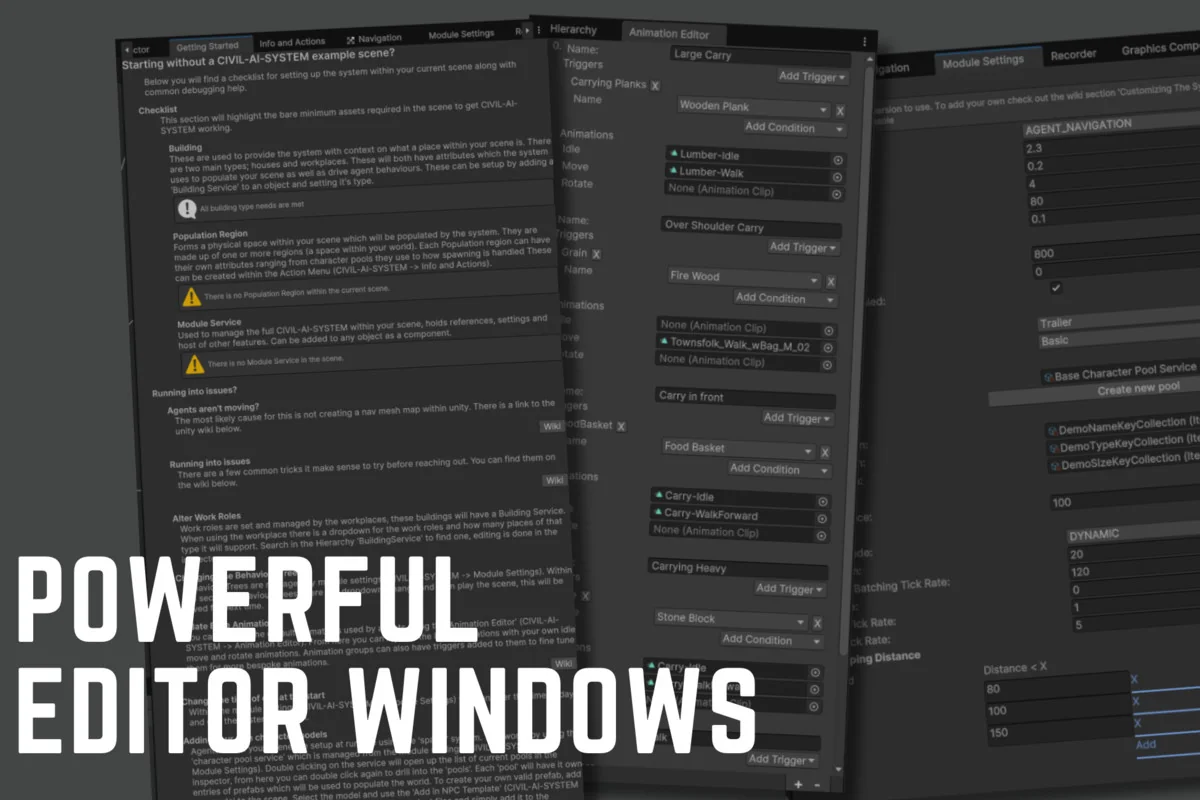

For projects requiring more granular control, the developer has included support for bespoke agents. This allows for the manual placement of specific citizens while still utilizing the broader automated system for the rest of the environment. To streamline the initial implementation, a ‘Getting Started’ checklist helper is built directly into the tool to guide the setup process.

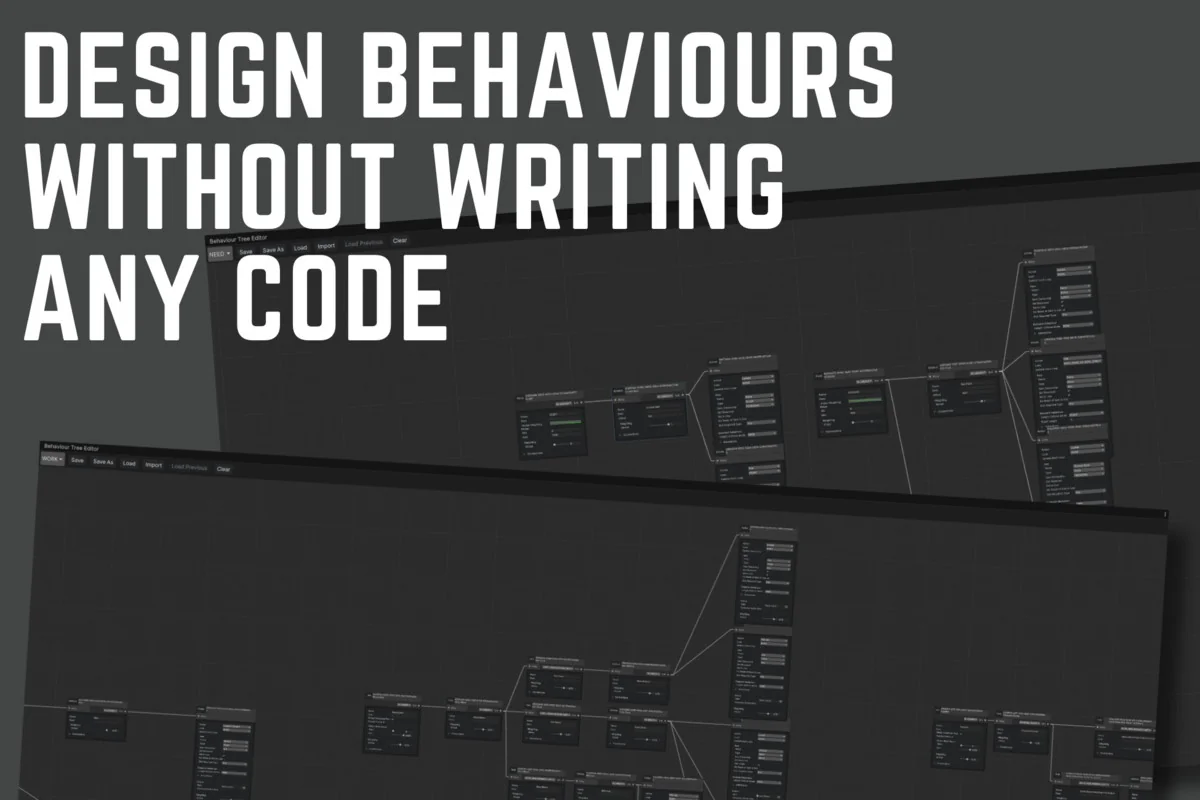

Defining Agent Logic Through Work and Need Systems

Rather than relying on abstract graphs or complex code, the system defines agent logic through real-life analogies. This logic is split into two primary behavior structures: work behaviors and need behaviors. Work behaviors let agents perform specific roles within the environment. A single task can be completed in more than one way, so if one path becomes unavailable the agent can use another, which makes the simulation more robust.
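To illustrate why multiple ways of completing a task add robustness, here is a minimal Python sketch, assuming a task holds an ordered list of alternative methods, each with an availability check against a simple world-state dictionary. `Method`, `Task`, `choose_method`, and the bakery example are hypothetical and are not taken from CIVIL-AI-SYSTEM.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical task model: each task lists alternative methods in order of preference.
@dataclass
class Method:
    name: str
    is_available: Callable[[dict], bool]  # inspects a simple world-state dict


@dataclass
class Task:
    name: str
    methods: list


def choose_method(task: Task, world: dict) -> Optional[str]:
    """Return the first method whose preconditions hold, or None if the task is blocked."""
    for method in task.methods:
        if method.is_available(world):
            return method.name
    return None


bake_bread = Task("bake_bread", [
    Method("use_oven", lambda w: w.get("oven_free", False)),
    Method("use_fire_pit", lambda w: w.get("fire_pit_free", False)),
])

print(choose_method(bake_bread, {"oven_free": True, "fire_pit_free": True}))   # use_oven
print(choose_method(bake_bread, {"oven_free": False, "fire_pit_free": True}))  # use_fire_pit
print(choose_method(bake_bread, {}))                                          # None
```

When the preferred path (the oven) is occupied, the agent falls back to the next method instead of stalling; only when every method is blocked does the task report that it cannot proceed.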

Need behaviors add a secondary layer of depth: they give agents personal requirements that drive their actions and add flavor to their routines. These behaviors are designed to be built iteratively, so complexity can be added gradually as the scene evolves.
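One common way to model needs that interrupt a work routine is a set of decaying values with thresholds. The Python sketch below assumes that model (values from 1.0 for satisfied down to 0.0 for critical, linear decay per hour, and a fixed interrupt threshold); `Need`, `Agent`, `tick`, and `next_action` are hypothetical names, and the source does not say how CIVIL-AI-SYSTEM actually scores needs.

```python
from dataclasses import dataclass, field

# Hypothetical need model: 1.0 means fully satisfied, 0.0 means critical.
@dataclass
class Need:
    name: str
    decay_per_hour: float
    threshold: float = 0.3   # below this the need interrupts work
    value: float = 1.0


@dataclass
class Agent:
    work_task: str
    needs: list = field(default_factory=list)

    def tick(self, hours: float) -> None:
        """Let every need decay over the elapsed time, clamped at 0."""
        for need in self.needs:
            need.value = max(0.0, need.value - need.decay_per_hour * hours)

    def next_action(self) -> str:
        """Work by default; otherwise address the most depleted urgent need."""
        urgent = [n for n in self.needs if n.value < n.threshold]
        if not urgent:
            return self.work_task
        return "satisfy:" + min(urgent, key=lambda n: n.value).name


baker = Agent("bake_bread", [Need("hunger", 0.1), Need("thirst", 0.2)])
print(baker.next_action())  # bake_bread
baker.tick(4)               # hunger -> 0.6, thirst -> about 0.2
print(baker.next_action())  # satisfy:thirst
```

Because each need is an independent entry in a list, adding a new one (for example rest) does not touch the work logic, which mirrors the iterative, layer-by-layer build-up the paragraph describes.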

Item Interaction and Ownership Mechanics

Interaction in CIVIL-AI-SYSTEM goes beyond simple proximity triggers. The tool features a deep item system where interactions can cause designed side effects, influencing the state of the agent or the world. This is supported by an ownership system, which gives agents a conceptual understanding of what belongs to them. Agents are capable of carrying, storing, and interacting with items in various ways, and these actions are reflected visually through the item system.
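The ownership and side-effect ideas can be sketched in a few lines of Python. This assumes an item carries an optional owner and a dictionary of stat changes applied on use, that an agent carries one item at a time, and that using an item consumes it; `Item`, `Agent`, `pick_up`, and `use_carried` are hypothetical and do not reflect the tool's real item system.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical item and ownership model for illustration only.
@dataclass
class Item:
    name: str
    owner: Optional[str] = None                   # None means the item is public
    effects: dict = field(default_factory=dict)   # stat name -> change applied on use


@dataclass
class Agent:
    name: str
    stats: dict = field(default_factory=dict)
    carrying: Optional[Item] = None

    def can_use(self, item: Item) -> bool:
        return item.owner is None or item.owner == self.name

    def pick_up(self, item: Item) -> bool:
        """Carry one item at a time, and only if it is public or owned by this agent."""
        if self.carrying is not None or not self.can_use(item):
            return False
        self.carrying = item
        return True

    def use_carried(self) -> bool:
        """Apply the carried item's side effects to this agent; the item is consumed."""
        if self.carrying is None:
            return False
        for stat, delta in self.carrying.effects.items():
            self.stats[stat] = self.stats.get(stat, 0) + delta
        self.carrying = None
        return True


bread = Item("bread", owner="Ada", effects={"satiety": 50})
ada = Agent("Ada", stats={"satiety": 20})
ben = Agent("Ben")

print(ben.pick_up(bread))   # False: Ben does not own the bread
print(ada.pick_up(bread))   # True
ada.use_carried()
print(ada.stats)            # {'satiety': 70}
```

Keeping ownership as a property of the item lets any agent answer "is this mine?" with a single check, while the effects dictionary is where designed side effects on the agent's state would live.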

Customization is handled through a layered approach, which makes it possible to create regions with a distinct ‘feel’ within a single project: animations and character types can be adjusted to suit the atmosphere of a particular neighborhood or district.

Visual Cues and Animation Management

To ensure that agent actions are readable to the viewer, the system includes a built-in animation controller. This controller is responsible for providing visual cues that correspond to the agents’ current tasks or needs. By linking behaviors directly to the animation state, the system ensures that the physical movements of the characters align with their internal logic, such as working, carrying an object, or fulfilling a personal need.

Performance and System Stability

The architecture of CIVIL-AI-SYSTEM is optimized to handle hundreds of agents simultaneously, making it suitable for dense urban environments or busy social hubs. To prevent project-breaking interruptions during development, the system uses a ‘Quiet Failing’ design: when an error occurs, it is logged for the user to review, and the simulation keeps running instead of crashing.
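The ‘Quiet Failing’ pattern amounts to isolating each unit of work so that one failure is recorded rather than propagated. A minimal Python sketch of that pattern, using the standard `logging` module, looks like this; `update_all` and the logger name are hypothetical, and the source does not describe how the tool itself catches or reports errors.

```python
import logging

logger = logging.getLogger("crowd_sim")  # hypothetical logger name


def update_all(agents: list, update_fn) -> int:
    """Call update_fn on every agent; log any exception and keep going. Returns the failure count."""
    failures = 0
    for agent in agents:
        try:
            update_fn(agent)
        except Exception:
            failures += 1
            # logger.exception records the message plus the traceback at ERROR level
            logger.exception("Update failed for agent %r; continuing", agent)
    return failures


results = []
failed = update_all([1, 2, 0, 4], lambda a: results.append(10 // a))
print(results)  # [10, 5, 2]
print(failed)   # 1
```

Catching `Exception` rather than `BaseException` keeps the simulation alive through ordinary bugs (here a division by zero for one agent) while still letting deliberate interrupts such as `KeyboardInterrupt` stop the program.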

As the tool has evolved, the developer has maintained backward compatibility across updates. As a result, substantial additions, such as the need features and the item system reworks introduced in earlier updates, can be integrated into existing projects without a total overhaul of their established logic.
