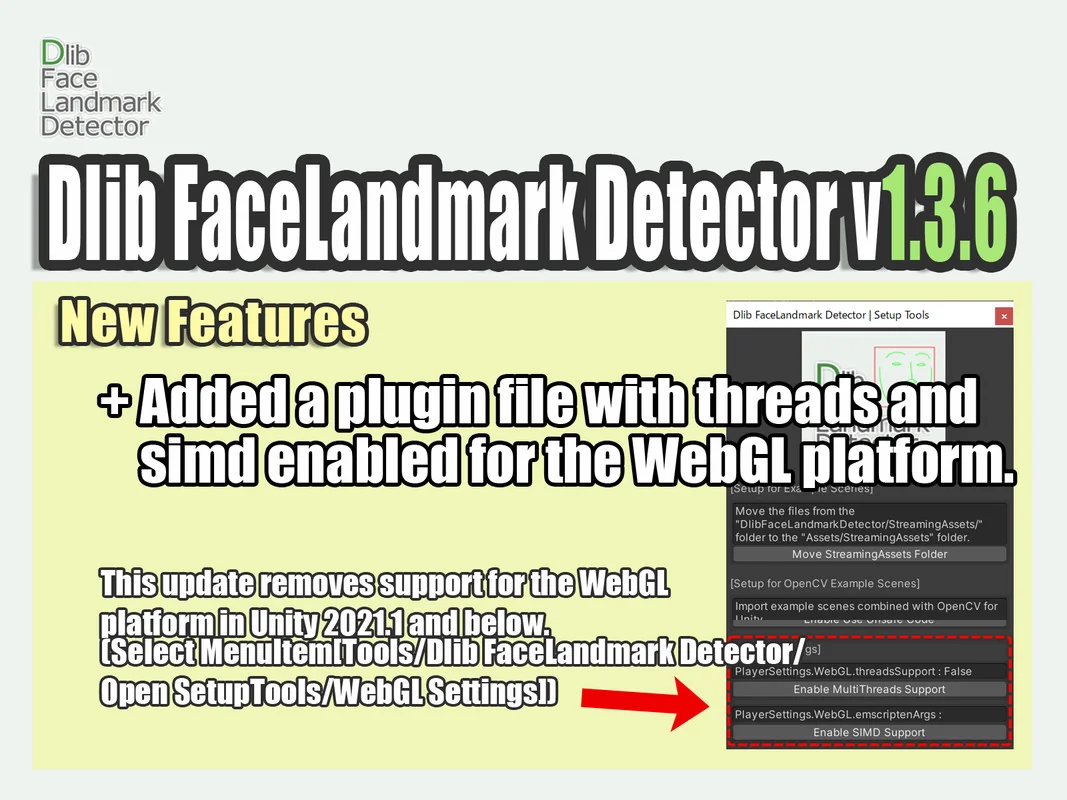

Facial Tracking and Object Detection via Dlib 19.7

Dlib FaceLandmark Detector is a specialized plugin designed to integrate the Dlib 19.7 C++ library directly into the Unity environment. This integration focuses on two primary computer vision tasks: object detection and shape prediction. By leveraging the library’s native capabilities, developers can implement high-performance tracking systems that operate within the engine’s lifecycle. The core of the plugin’s object detection system utilizes a Histogram of Oriented Gradients (HOG) feature set, which is paired with a linear classifier, an image pyramid, and a sliding window detection scheme. This combination is specifically optimized for detecting frontal human faces but can be extended to other objects through specific training processes.
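To make the detection scheme more concrete, here is a minimal Python sketch of an image pyramid scanned by a sliding window and scored by a linear classifier. It is not the plugin's or Dlib's implementation. The function names, the 2x downscaling step, and the use of raw pixel values in place of HOG features are all assumptions chosen to keep the example short.

```python
from typing import Iterator, List, Tuple

Image = List[List[float]]        # grayscale image as rows of pixel values
Box = Tuple[int, int, int, int]  # (x, y, width, height) in original-image pixels


def downscale(img: Image) -> Image:
    """Halve the resolution by averaging each 2x2 block of pixels."""
    h, w = len(img) // 2, len(img[0]) // 2
    return [
        [
            (img[2 * y][2 * x] + img[2 * y][2 * x + 1]
             + img[2 * y + 1][2 * x] + img[2 * y + 1][2 * x + 1]) / 4.0
            for x in range(w)
        ]
        for y in range(h)
    ]


def pyramid(img: Image, min_size: int) -> Iterator[Tuple[int, Image]]:
    """Yield (scale, image) pairs until the image is smaller than the window."""
    scale = 1
    while len(img) >= min_size and len(img[0]) >= min_size:
        yield scale, img
        img = downscale(img)
        scale *= 2


def linear_score(window: Image, weights: List[float], bias: float) -> float:
    """Linear classifier: dot product of the flattened window with the weights.

    A real HOG detector would score gradient-orientation histograms here;
    raw pixels are a stand-in (assumption) to keep the sketch small.
    """
    flat = [p for row in window for p in row]
    return sum(w * p for w, p in zip(weights, flat)) + bias


def detect(img: Image, win: int, stride: int,
           weights: List[float], bias: float) -> List[Box]:
    """Slide a fixed-size window over every pyramid level and keep positive scores."""
    hits: List[Box] = []
    for scale, level in pyramid(img, win):
        for y in range(0, len(level) - win + 1, stride):
            for x in range(0, len(level[0]) - win + 1, stride):
                window = [row[x:x + win] for row in level[y:y + win]]
                if linear_score(window, weights, bias) > 0.0:
                    # Map the hit back to original-image coordinates.
                    hits.append((x * scale, y * scale, win * scale, win * scale))
    return hits
```

Because the window size stays fixed while the image shrinks, a hit on a coarser pyramid level corresponds to a larger object in the original frame. That is how a single classifier can find faces at different distances from the camera.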

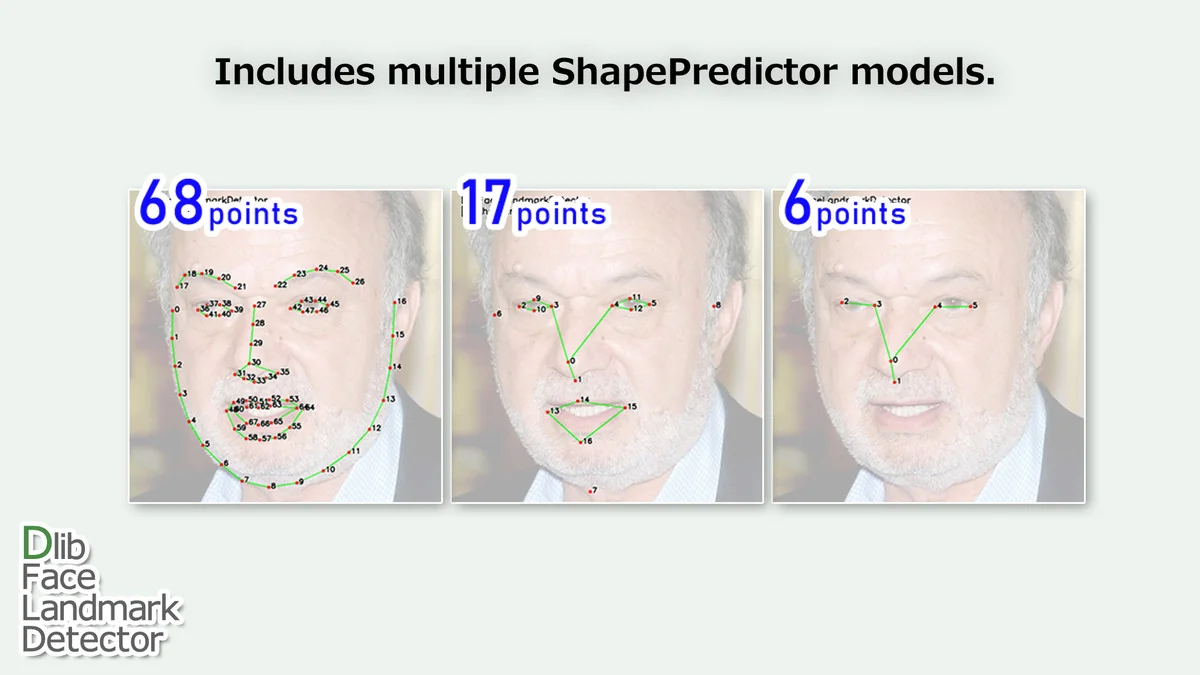

Shape Prediction and Landmark Point Sets

The plugin provides precise shape prediction capabilities based on the regression tree ensemble method. This implementation allows for the detection of specific face landmarks, offering three distinct levels of detail depending on the project’s requirements. Developers can utilize a 68-point model for full facial mapping, a 17-point model for simplified tracking, or a 6-point model for basic orientation and feature placement. These landmark sets are derived from the methodologies outlined in the research paper “One Millisecond Face Alignment with an Ensemble of Regression Trees” by Vahid Kazemi and Josephine Sullivan. This approach is designed for speed, facilitating real-time alignment that is critical for interactive applications.
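When working with the 68-point set, it often helps to split the flat list of points into facial regions. The Python sketch below assumes the points arrive in the ordering used by Dlib's standard 68-point face model, with left and right given from the subject's perspective. Whether the plugin's 68-point output uses exactly this ordering should be verified against its examples, and the 17-point and 6-point layouts are not covered here. The helper names are hypothetical.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]

# Index ranges (start inclusive, end exclusive) for the common 68-point layout.
# Assumption: the detector returns points in this order.
REGIONS_68: Dict[str, Tuple[int, int]] = {
    "jaw": (0, 17),
    "right_eyebrow": (17, 22),
    "left_eyebrow": (22, 27),
    "nose": (27, 36),
    "right_eye": (36, 42),
    "left_eye": (42, 48),
    "outer_mouth": (48, 60),
    "inner_mouth": (60, 68),
}


def group_landmarks(points: List[Point]) -> Dict[str, List[Point]]:
    """Split a flat list of 68 landmark points into named facial regions."""
    if len(points) != 68:
        raise ValueError(f"expected 68 points, got {len(points)}")
    return {name: points[a:b] for name, (a, b) in REGIONS_68.items()}


def centroid(points: List[Point]) -> Point:
    """Average position of a group of points, e.g. the center of an eye."""
    n = len(points)
    return (sum(p[0] for p in points) / n, sum(p[1] for p in points) / n)
```

A typical use is to take the centroids of the two eye groups to estimate head roll, or to anchor an overlay between the eyes.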

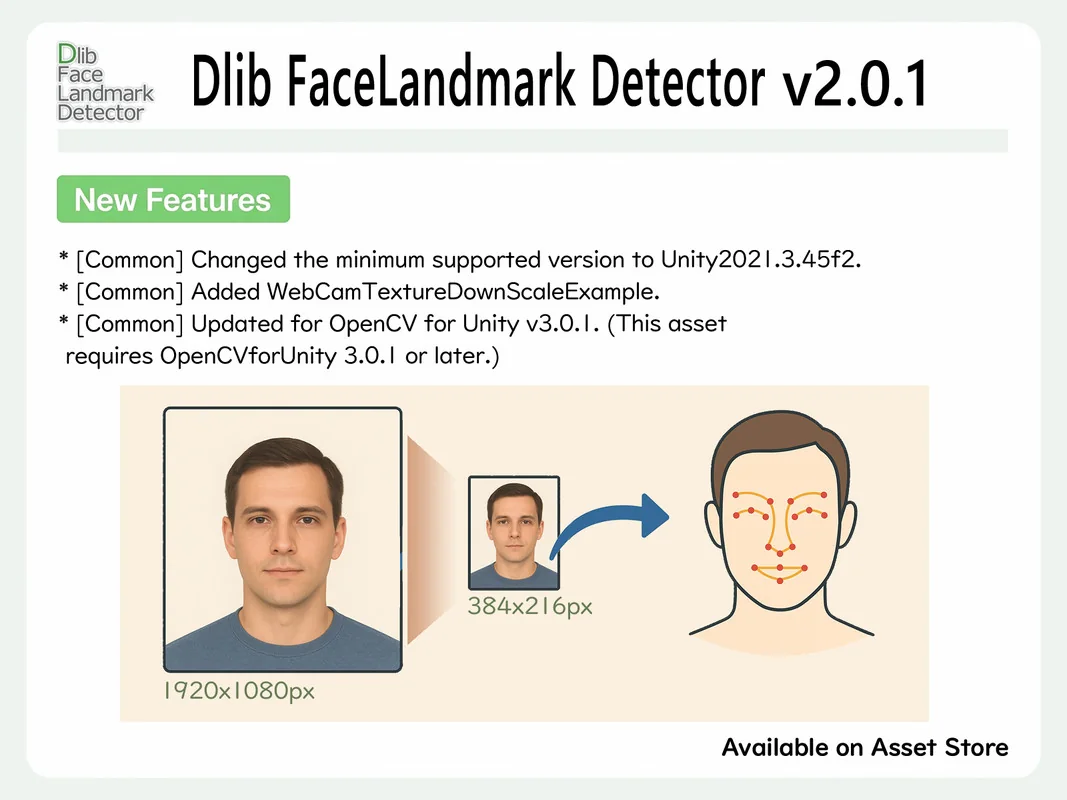

Input Flexibility and Image Processing

The detector is built to handle various data inputs common in Unity development. It natively supports Texture2D and WebCamTexture, allowing for detection from both static image files and live camera feeds. Additionally, it can process raw image byte arrays. For projects requiring more advanced image manipulation, the plugin integrates with OpenCV for Unity. When these two tools are combined, the detector can accept inputs directly from OpenCV’s Mat class. This synergy enables a more complex image processing pipeline where frames can be pre-processed or filtered before being passed to the Dlib detection scripts.

Resource management is a key consideration for performance-heavy tasks like computer vision. The FaceLandmarkDetector class implements the IDisposable interface, so developers can wrap a detector in a C# “using” statement. The detector’s native resources are then released as soon as the block exits, which helps prevent memory leaks in high-frame-rate scenarios.
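The deterministic-cleanup pattern behind the “using” statement can be sketched in Python, where a context manager plays the role of IDisposable. This is an analogue only, not the plugin's API: `NativeDetector`, its methods, and the `model.dat` path are hypothetical stand-ins for a wrapper that owns native memory.

```python
from typing import Any, List


class NativeDetector:
    """Hypothetical wrapper that owns a native resource (illustration only)."""

    live_handles = 0  # counts resources that have not been released yet

    def __init__(self, model_path: str) -> None:
        self.model_path = model_path
        self.disposed = False
        NativeDetector.live_handles += 1  # stands in for a native allocation

    def detect(self, frame: Any) -> List[Any]:
        if self.disposed:
            raise RuntimeError("detector used after dispose")
        return []  # placeholder result; a real detector would return rectangles

    def dispose(self) -> None:
        # Safe to call more than once, like a well-behaved Dispose().
        if not self.disposed:
            self.disposed = True
            NativeDetector.live_handles -= 1

    def __enter__(self) -> "NativeDetector":
        return self

    def __exit__(self, exc_type, exc, tb) -> bool:
        self.dispose()  # runs on normal exit and when an exception is raised
        return False    # do not swallow exceptions


def process(frames: List[Any], model_path: str = "model.dat") -> List[List[Any]]:
    """Run detection on all frames, releasing the detector when done."""
    with NativeDetector(model_path) as detector:
        return [detector.detect(f) for f in frames]
```

The point of the pattern is that cleanup is tied to leaving the block, not to garbage collection, so the native memory is released even when detection throws partway through.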

Cross-Platform Compatibility and Visual Scripting

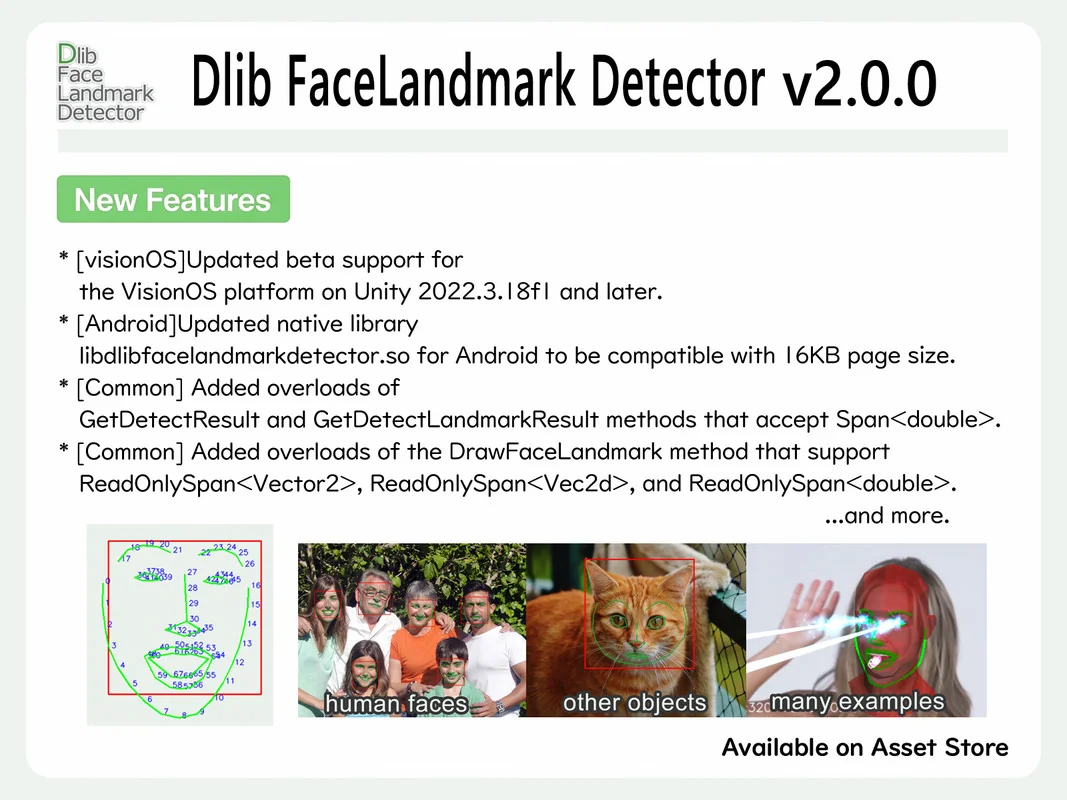

The plugin targets a broad range of platforms. It supports iOS and Android on mobile, Windows 10 UWP, and standalone builds for Windows, Mac, and Linux, along with WebGL and ChromeOS. visionOS support is included in beta. The plugin also supports previews within the Unity Editor, allowing for testing and iteration without constant deployment to a physical device.

For those who prefer node-based logic over traditional coding, the package includes support for Unity’s Visual Scripting, so the full feature set of the Dlib FaceLandmark Detector, from object detection to shape prediction, can be accessed and controlled through visual graphs. The creator provides examples that demonstrate how these integrations are structured within a visual workflow.

Extensibility through Custom Training

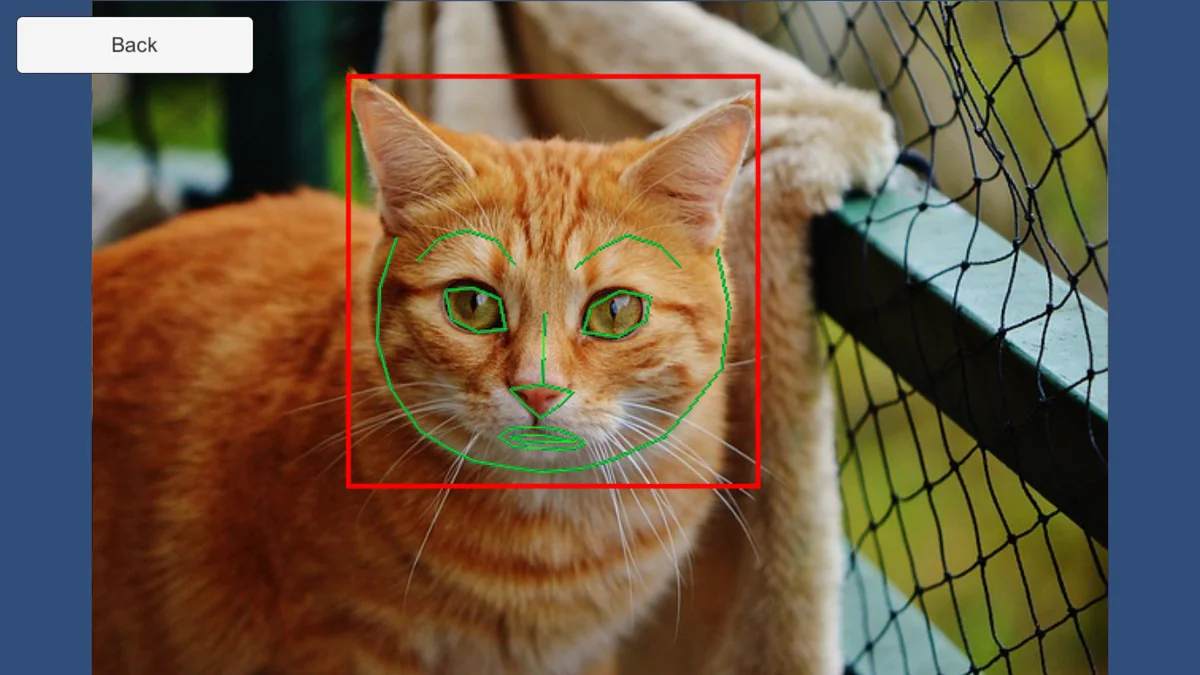

While the plugin is equipped for face detection out of the box, it is not limited to human features. It includes access to Dlib’s machine learning tools, which allow for custom training. Developers can use the Object Detector Training Tool to create models for non-human objects and the Shape Predictor Training Tool to define custom landmark sets. This flexibility makes the plugin suitable for projects involving specialized tracking, such as animal features or industrial components, provided the developer trains the necessary models using the provided Dlib toolset.

Example Scenarios and Practical Implementation

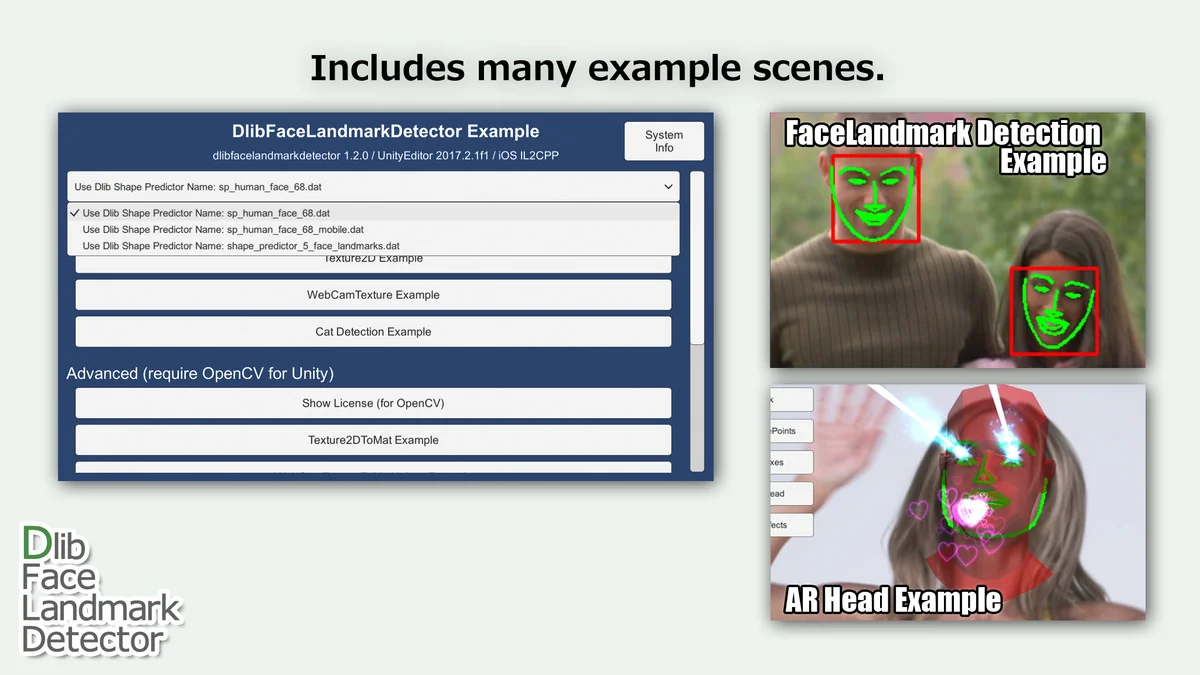

To assist in the development process, the plugin contains a variety of example scenes and scripts that illustrate different use cases. These range from basic implementations, such as simple Texture2D and WebCamTexture benchmarks, to more complex setups. Advanced examples demonstrate the integration with OpenCV for Unity, including tasks like WebCamTexture-to-Mat conversion and video capture helpers. One advanced scenario is the ARHead example, which shows how facial landmark data can drive augmented reality elements or head-tracking logic within a scene.
